Blog


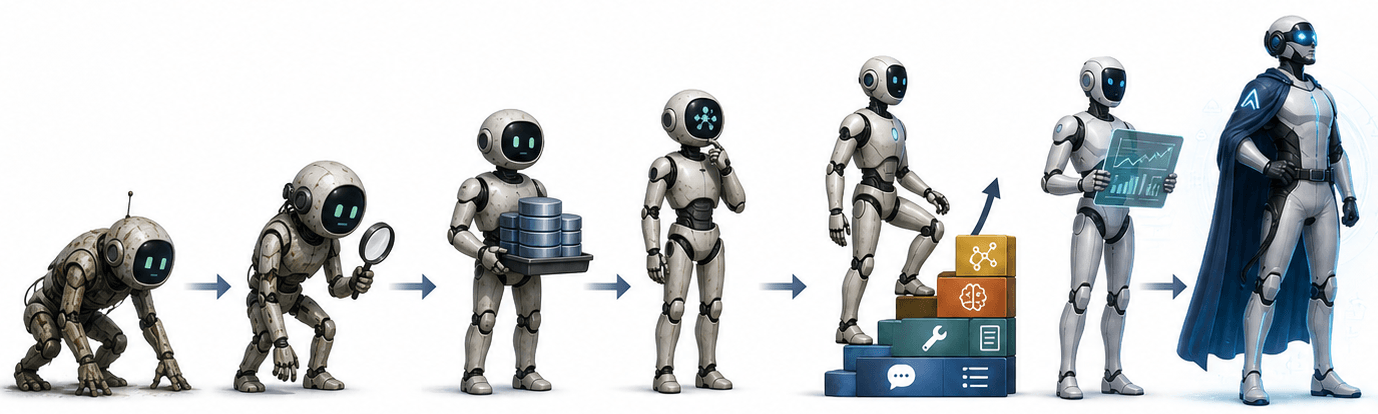

Continuously Improving Agents

Continuous improvement for agents requires a flywheel that observes behavior at scale, curates traces into datasets, diagnoses failures, picks the cheapest intervention, evaluates candidates, and redeploys safely. Agent failures are behavioral and silent, not the loud exceptions traditional software produces, so the discipline lies in the weighting policy that resolves metric conflicts, the lineage that connects production traces to release decisions, and the rollback stack that covers prompts, tools, indices, and policies rather than just model weights. The companies that win the agent decade won't be the ones with the cleverest prompt or biggest fine-tune; they'll be the ones whose learning infrastructure turns fastest.

May 4, 2026 Blog Post by ilian Herzi, Gabriele Sorrento

18 min read

RAG, RL, and the Judge You Need Before Either

This post presents a practical framework for diagnosing and fixing AI agent failures by distinguishing between forgetfulness (context problems solved by RAG) and poor reasoning (behavior problems solved by RL), with LLM judges as the diagnostic layer connecting both.

Apr 24, 2026 Blog Post by ilian Herzi

6 min read

Playbook: Build Agents That Actually Don't Break

A comprehensive playbook for building reliable AI agents, covering the five essential pillars: Model Context, Guardrails, Graceful Fails, Observability, and Continuous Improvement—with specific tools and strategies for each.

Apr 21, 2026 Blog Post by Gabriele Sorrento, ilian Herzi

12 min read

Silverstream AI: Achieving 95% Agent Reliability with Agent Root Cause Analysis

Discover how Silverstream AI solved the 'black box' problem of agent deployment, using root cause analysis and semantic clustering to diagnose failures at scale and achieve 95% agent reliability.

Feb 13, 2026 Case Study by Gabriele Sorrento

5 min read

Beyond Keywords: Accelerating eDiscovery

Legal teams waste billions on manual document review while missing 80% of relevant evidence. AI-powered multimodal semantic search eliminates the bottleneck, finding critical documents instantly without seed set bias or training phases.

Dec 6, 2025 Blog Post by Gabriele Sorrento

4 min read

Agentic Annotations

Manual annotations don't scale. Agentic Annotations automate data labeling after just a few examples, helping AI teams move from prototype to production in days instead of months, without the human bottleneck.

Aug 22, 2025 Blog Post by Interpret AI

4 min read

Data scale is NOT all you need

Blindly scaling datasets puts AI companies at risk. Learn why intelligent data curation—not just more data—is the key to building safer, more effective AI models and how to properly select training data.

Jul 21, 2025 Blog Post by ilian Herzi

17 min read